HTML5

A vocabulary and associated APIs for HTML and XHTML


The img element

If the element has a usemap attribute: Interactive content.

Content attributes:

alt - Replacement text for use when images are not available

src - Address of the resource

crossorigin - How the element handles crossorigin requests

usemap - Name of image map to use

ismap - Whether the image is a server-side image map

width - Horizontal dimension

height - Vertical dimension

Allowed ARIA role attribute values: presentation role only, for an img element whose alt attribute's value is empty (alt=""); otherwise any role value.

Allowed ARIA state and property attributes: aria-* attributes applicable to the allowed roles.

DOM interface:

[NamedConstructor=Image(optional unsigned long width, optional unsigned long height)]

interface HTMLImageElement : HTMLElement {

attribute DOMString alt;

attribute DOMString src;

attribute DOMString crossOrigin;

attribute DOMString useMap;

attribute boolean isMap;

attribute unsigned long width;

attribute unsigned long height;

readonly attribute unsigned long naturalWidth;

readonly attribute unsigned long naturalHeight;

readonly attribute boolean complete;

};

An img element represents an image.

The image given by the src attribute is the embedded content; the value of the alt attribute provides equivalent content for those who cannot process images or who have image loading disabled.

The requirements on the alt attribute's value are described in the next section.

The src attribute must be present, and must contain a valid non-empty URL potentially surrounded by spaces referencing a non-interactive, optionally animated, image resource that is neither paged nor scripted.

The requirements above imply that images can be static bitmaps (e.g. PNGs, GIFs, JPEGs), single-page vector documents (single-page PDFs, XML files with an SVG root element), animated bitmaps (APNGs, animated GIFs), animated vector graphics (XML files with an SVG root element that use declarative SMIL animation), and so forth. However, these definitions preclude SVG files with script, multipage PDF files, interactive MNG files, HTML documents, plain text documents, and so forth. [PNG] [GIF] [JPEG] [PDF] [XML] [APNG] [SVG] [MNG]

The img element must not be used as a layout tool. In particular, img elements should not be used to display transparent images, as such images rarely convey meaning and rarely add anything useful to the document.

The crossorigin attribute is a CORS settings attribute. Its purpose is to allow images from third-party sites that allow cross-origin access to be used with canvas.

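An img element is always in one of the following states:

Unavailable: The user agent hasn't obtained any image data, or has obtained some or all of the image data but hasn't yet decoded enough of the image to get the image dimensions.

Partially available: The user agent has obtained some of the image data and at least the image dimensions are available.

Completely available: The user agent has obtained all of the image data and at least the image dimensions are available.

Broken: The user agent has obtained all of the image data that it can, but it cannot even decode the image enough to get the image dimensions (e.g. the image is corrupted, or the format is not supported, or no data could be obtained).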

When an img element is either in the partially available state or in the completely available state, it is said to be available.

An img element is initially unavailable.
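As an illustration only, the four states and the derived notion of "available" can be modelled as a small enumeration. The names ImgState, is_available, and ImgModel are hypothetical and are not part of any DOM API; this is a sketch, not a user agent implementation.

```python
from enum import Enum


class ImgState(Enum):
    """The four states an img element can be in (hypothetical model)."""
    UNAVAILABLE = "unavailable"
    PARTIALLY_AVAILABLE = "partially available"
    COMPLETELY_AVAILABLE = "completely available"
    BROKEN = "broken"


def is_available(state: ImgState) -> bool:
    # "Available" means partially available or completely available.
    return state in (ImgState.PARTIALLY_AVAILABLE, ImgState.COMPLETELY_AVAILABLE)


class ImgModel:
    """Hypothetical stand-in for an img element's image state."""

    def __init__(self) -> None:
        # An img element is initially unavailable.
        self.state = ImgState.UNAVAILABLE

    @property
    def available(self) -> bool:
        return is_available(self.state)


img = ImgModel()
print(img.state.value, img.available)  # unavailable False
```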

When an img element is available, it provides a paint source whose width is the image's intrinsic width, whose height is the image's intrinsic height, and whose appearance is the intrinsic appearance of the image.

In a browsing context where scripting is disabled, user agents may obtain images immediately or on demand. In a browsing context where scripting is enabled, user agents must obtain images immediately.

A user agent that obtains images immediately must synchronously update the image data of an img element whenever that element is created with a src attribute.

A user agent that obtains images immediately must also synchronously update the image data of an img element whenever that element has its src or crossorigin attribute set, changed, or removed.

A user agent that obtains images on demand must update the image data of an img element whenever it needs the image data (i.e. on demand), but only if the img element has a src attribute, and only if the img element is in the unavailable state. When an img element's src or crossorigin attribute is set, changed, or removed, if the user agent only obtains images on demand, the img element must return to the unavailable state.

Each img element has a last selected source, which must initially be null, and a current pixel density, which must initially be undefined.

When an img element has a current pixel density that is not 1.0, the element's image data must be treated as if its resolution, in device pixels per CSS pixel, were the current pixel density.

For example, if the current pixel density is 3.125, that means that there are 300 device pixels per CSS inch, and thus if the image data is 300x600, it has intrinsic dimensions of 96 CSS pixels by 192 CSS pixels.
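The arithmetic in this example can be checked with a few lines of Python. The sketch assumes only the CSS definition of 96 CSS pixels per CSS inch; the function names are illustrative.

```python
from typing import Tuple

CSS_PIXELS_PER_CSS_INCH = 96  # CSS fixes 1in = 96px


def device_pixels_per_css_inch(density: float) -> float:
    # The current pixel density is measured in device pixels per CSS pixel.
    return density * CSS_PIXELS_PER_CSS_INCH


def intrinsic_css_size(data_w: int, data_h: int, density: float) -> Tuple[float, float]:
    # Image data dimensions (device pixels) divided by the density give CSS pixels.
    return (data_w / density, data_h / density)


print(device_pixels_per_css_inch(3.125))    # 300.0
print(intrinsic_css_size(300, 600, 3.125))  # (96.0, 192.0)
```

With the default density of 1.0, the intrinsic dimensions equal the image data dimensions.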

Each Document object must have a list of available images. Each image in this list is identified by a tuple consisting of an absolute URL, a CORS settings attribute mode, and, if the mode is not No CORS, an origin. User agents may copy entries from one Document object's list of available images to another at any time (e.g. when the Document is created, user agents can add to it all the images that are loaded in other Documents), but must not change the keys of entries copied in this way when doing so. User agents may also remove images from such lists at any time (e.g. to save memory).
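A minimal sketch of the keying rule, assuming a dictionary-backed cache: the origin only participates in the key when the mode is not No CORS, so a No CORS entry can be reused by documents of any origin while CORS-mode entries cannot. ImageKey, make_key, and AvailableImages are hypothetical names, and the stored bytes stand in for decoded image data.

```python
from dataclasses import dataclass
from typing import Dict, Optional

NO_CORS = "No CORS"
ANONYMOUS = "Anonymous"
USE_CREDENTIALS = "Use Credentials"


@dataclass(frozen=True)
class ImageKey:
    url: str               # absolute URL
    mode: str              # CORS settings attribute state
    origin: Optional[str]  # present only when mode is not No CORS


def make_key(url: str, mode: str, document_origin: str) -> ImageKey:
    # The origin is part of the key only when the mode is not No CORS.
    return ImageKey(url, mode, None if mode == NO_CORS else document_origin)


class AvailableImages:
    """Hypothetical per-Document list of available images."""

    def __init__(self) -> None:
        self._entries: Dict[ImageKey, bytes] = {}

    def add(self, key: ImageKey, data: bytes) -> None:
        self._entries[key] = data

    def lookup(self, key: ImageKey) -> Optional[bytes]:
        return self._entries.get(key)

    def copy_to(self, other: "AvailableImages") -> None:
        # Entries may be copied between Documents; their keys stay unchanged.
        other._entries.update(self._entries)

    def remove(self, key: ImageKey) -> None:
        # User agents may drop entries at any time, e.g. to save memory.
        self._entries.pop(key, None)
```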

When the user agent is to update the image data of an img element, it must run the following steps:

Return the img element to the unavailable state.

If an instance of the fetching algorithm is still running for this element, then abort that algorithm, discarding any pending tasks generated by that algorithm.

Forget the img element's current image data, if any.

If the user agent cannot support images, or its support for images has been disabled, then abort these steps.

If the element has a src attribute specified and its value is not the empty string, let selected source be the value of the element's src attribute, and selected pixel density be 1.0. Otherwise, let selected source be null and selected pixel density be undefined.

Let the img element's last selected source be selected source and the img element's current pixel density be selected pixel density.

If selected source is not null, run these substeps:

Resolve selected source, relative to the element. If that is not successful, abort these steps.

Let key be a tuple consisting of the resulting absolute URL, the img element's crossorigin attribute's mode, and, if that mode is not No CORS, the Document object's origin.

If the list of available images contains an entry for key, then set the img element to the completely available state, update the presentation of the image appropriately, queue a task to fire a simple event named load at the img element, and abort these steps.

Asynchronously await a stable state, allowing the task that invoked this algorithm to continue. The synchronous section consists of all the remaining steps of this algorithm until the algorithm says the synchronous section has ended. (Steps in synchronous sections are marked with ⌛.)

⌛ If another instance of this algorithm for this img element was started after this instance (even if it aborted and is no longer running), then abort these steps.

Only the last instance takes effect, to avoid multiple requests when, for example, the src and crossorigin attributes are both set in succession.

⌛ If selected source is null, then set the element to the broken state, queue a task to fire a simple event named error at the img element, and abort these steps.

⌛ Queue a task to fire a progress event named loadstart at the img element.

⌛ Do a potentially CORS-enabled fetch of the absolute URL that resulted from the earlier step, with the mode being the current state of the element's crossorigin content attribute, the origin being the origin of the img element's Document, and the default origin behaviour set to taint.

The resource obtained in this fashion, if any, is the img element's image data.

It can be either CORS-same-origin or CORS-cross-origin; this affects the origin of the image itself (e.g. when used on a canvas).

Fetching the image must delay the load event of the element's document until the task that is queued by the networking task source once the resource has been fetched (defined below) has been run.

This, unfortunately, can be used to perform a rudimentary port scan of the user's local network (especially in conjunction with scripting, though scripting isn't actually necessary to carry out such an attack). User agents may implement cross-origin access control policies that are stricter than those described above to mitigate this attack, but unfortunately such policies are typically not compatible with existing Web content.

If the resource is CORS-same-origin, each task that is queued by the networking task source while the image is being fetched must fire a progress event named progress at the img element.

End the synchronous section, continuing the remaining steps asynchronously, but without missing any data from the fetch algorithm.

As soon as possible, jump to the first applicable entry from the following list:

If the resource type is multipart/x-mixed-replace: The next task that is queued by the networking task source while the image is being fetched must set the img element's state to partially available.

Each task that is queued by the networking task source while the image is being fetched must update the presentation of the image, but as each new body part comes in, it must replace the previous image. Once one body part has been completely decoded, the user agent must set the img element to the completely available state and queue a task to fire a simple event named load at the img element.

The progress and loadend events are not fired for multipart/x-mixed-replace image streams.

If the resource type and data correspond to a supported image format, as described below: The next task that is queued by the networking task source while the image is being fetched must set the img element's state to partially available.

That task, and each subsequent task, that is queued by the networking task source while the image is being fetched must update the presentation of the image appropriately (e.g. if the image is a progressive JPEG, each packet can improve the resolution of the image).

Furthermore, the last task that is queued by the networking task source once the resource has been fetched must additionally run the steps for the matching entry in the following list:

If the download was successful and the user agent was able to determine the image's width and height:

Set the img element to the completely available state.

Add the image to the list of available images using the key key.

If the resource is CORS-same-origin: fire a progress event named load at the img element. If the resource is CORS-cross-origin: fire a simple event named load at the img element.

If the resource is CORS-same-origin: fire a progress event named loadend at the img element. If the resource is CORS-cross-origin: fire a simple event named loadend at the img element.


Otherwise: Either the image data is corrupted in some fatal way such that the image dimensions cannot be obtained, or the image data is not in a supported file format; the user agent must set the img element to the broken state, abort the fetching algorithm (discarding any pending tasks generated by that algorithm), and then queue a task to first fire a simple event named error at the img element and then fire a simple event named loadend at the img element.
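To make the event ordering of the algorithm above easier to follow, here is a simplified, hypothetical model of which events one run fires at the img element. It does not model multipart/x-mixed-replace responses, URL resolution failures (which abort without events), disabled image support, or the relative order of the final progress event and load within the last networking task; all parameter names are assumptions.

```python
from typing import List, Optional


def update_image_data_events(
    src: Optional[str],
    in_available_images: bool,
    decodable: bool,
    cors_same_origin: bool,
    network_tasks: int = 0,
) -> List[str]:
    """Hypothetical model of events fired by one run of "update the image data".

    src: the src attribute value, or None if absent.
    in_available_images: the key already has an entry in the list of available images.
    decodable: the fetched data yields image dimensions in a supported format.
    cors_same_origin: the response is CORS-same-origin.
    network_tasks: networking tasks queued while the image is being fetched.
    """
    if not src:
        # Selected source is null: the element becomes broken; only "error" fires.
        return ["error"]
    if in_available_images:
        # Cache hit: completely available at once; a simple "load" event fires.
        return ["load"]
    events = ["loadstart"]
    if cors_same_origin:
        # Only CORS-same-origin fetches fire "progress" events.
        events += ["progress"] * network_tasks
    if decodable:
        events += ["load", "loadend"]
    else:
        events += ["error", "loadend"]
    return events


print(update_image_data_events("photo.png", False, True, True, network_tasks=2))
# ['loadstart', 'progress', 'progress', 'load', 'loadend']
```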

While a user agent is running the above algorithm for an element x, there must be a strong reference from the element's Document to the element x, even if that element is not in its Document.
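The rule in the algorithm above that only the last instance takes effect behaves like a generation counter: every run takes a sequence number, and at the stable-state checkpoint a run aborts if a later run has started, even one that has since aborted. A minimal sketch, in which the class name and the fetched list (a stand-in for starting the fetch) are hypothetical:

```python
from typing import List


class UpdateImageDataRuns:
    """Hypothetical per-element bookkeeping for runs of "update the image data"."""

    def __init__(self) -> None:
        self._latest_run = 0
        self.fetched: List[str] = []

    def start_run(self) -> int:
        self._latest_run += 1
        return self._latest_run

    def at_stable_state(self, run_id: int, url: str) -> bool:
        if run_id != self._latest_run:
            return False  # a newer instance exists: abort these steps
        self.fetched.append(url)  # stands in for starting the fetch
        return True


# Setting src and then crossorigin in quick succession starts two runs,
# but only the second one reaches the fetch.
runs = UpdateImageDataRuns()
first = runs.start_run()   # src set
second = runs.start_run()  # crossorigin set
runs.at_stable_state(first, "photo.png")
runs.at_stable_state(second, "photo.png")
print(runs.fetched)  # ['photo.png']
```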

When an img element is in the completely available state and the user agent can decode the media data without errors, then the img element is said to be fully decodable.

Whether the image is fetched successfully or not (e.g. whether the response code was a 2xx code or equivalent) must be ignored when determining the image's type and whether it is a valid image.

This allows servers to return images with error responses, and have them displayed.

The user agent should apply the image sniffing rules to determine the type of the image, with the image's associated Content-Type headers giving the official type. If these rules are not applied, then the type of the image must be the type given by the image's associated Content-Type headers.
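A simplified sketch of sniffing follows. It implements only a subset of the image signatures from the WHATWG MIME Sniffing Standard, keeps XML types such as image/svg+xml as supplied, and falls back to the Content-Type value when nothing matches; the helper names are hypothetical. The function deliberately takes no HTTP status code, matching the rule that whether the fetch succeeded is ignored when determining the type.

```python
from typing import Optional

# A subset of the image type patterns in the MIME Sniffing Standard
# (plain byte-prefix signatures; WebP is checked separately below).
_IMAGE_SIGNATURES = [
    (b"\x00\x00\x01\x00", "image/x-icon"),
    (b"\x00\x00\x02\x00", "image/x-icon"),
    (b"BM", "image/bmp"),
    (b"GIF87a", "image/gif"),
    (b"GIF89a", "image/gif"),
    (b"\x89PNG\r\n\x1a\n", "image/png"),
    (b"\xff\xd8\xff", "image/jpeg"),
]


def is_xml_mime_type(mime: str) -> bool:
    essence = mime.split(";", 1)[0].strip().lower()
    return essence.endswith("+xml") or essence in ("text/xml", "application/xml")


def sniff_image_type(data: bytes, content_type: Optional[str]) -> Optional[str]:
    """Content-Type is the official type; byte signatures may override it."""
    if content_type is not None and is_xml_mime_type(content_type):
        return content_type  # XML types (e.g. SVG) are not overridden
    for signature, mime in _IMAGE_SIGNATURES:
        if data.startswith(signature):
            return mime
    # WebP: "RIFF", four length bytes, then "WEBPVP".
    if len(data) >= 14 and data[:4] == b"RIFF" and data[8:14] == b"WEBPVP":
        return "image/webp"
    # No pattern matched: use the type from the Content-Type headers.
    return content_type
```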

User agents must not support non-image resources with the img element (e.g. XML files whose root element is an HTML element). User agents must not run executable code (e.g. scripts) embedded in the image resource. User agents must only display the first page of a multipage resource (e.g. a PDF file). User agents must not allow the resource to act in an interactive fashion, but should honor any animation in the resource.

This specification does not specify which image types are to be supported.

What an img element represents depends on the src attribute and the alt attribute.

If the src attribute is set and the alt attribute is set to the empty string: The image is either decorative or supplemental to the rest of the content, redundant with some other information in the document.

If the image is available and the user agent is configured to display that image, then the element represents the element's image data.

Otherwise, the element represents nothing, and may be omitted completely from the rendering. User agents may provide the user with a notification that an image is present but has been omitted from the rendering.

If the src attribute is set and the alt attribute is set to a value that isn't empty: The image is a key part of the content; the alt attribute gives a textual equivalent or replacement for the image.

If the image is available and the user agent is configured to display that image, then the element represents the element's image data.

Otherwise, the element represents the text given by the alt attribute. User agents may provide the user with a notification that an image is present but has been omitted from the rendering.

If the src attribute is set and the alt attribute is not: There is no textual equivalent of the image available.

If the image is available and the user agent is configured to display that image, then the element represents the element's image data.

Otherwise, the user agent should display some sort of indicator that there is an image that is not being rendered, and may, if requested by the user, or if so configured, or when required to provide contextual information in response to navigation, provide caption information for the image, derived as follows:

If the image is a descendant of a figure element that has a child figcaption element, and, ignoring the figcaption element and its descendants, the figure element has no Text node descendants other than inter-element whitespace, and no embedded content descendant other than the img element, then the contents of the first such figcaption element are the caption information; abort these steps.

There is no caption information.

If the src attribute is not set and either the alt attribute is set to the empty string or the alt attribute is not set at all: The element represents nothing.

Otherwise: The element represents the text given by the alt attribute.
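The cases above can be summarized as a decision function. This is a hypothetical helper, not a browser API: it assumes the caller already knows whether the image is available and the user agent is configured to display it, and it returns a short label rather than a rendering.

```python
from typing import Optional


def img_represents(src: Optional[str], alt: Optional[str], image_displayed: bool) -> str:
    """Summarize what an img element represents (hypothetical helper).

    src, alt: attribute values, or None when the attribute is absent.
    image_displayed: the image is available and the UA is configured to show it.
    """
    if src is not None:
        if image_displayed:
            return "image data"
        if alt == "":
            return "nothing"        # decorative or supplemental image
        if alt is not None:
            return "text: " + alt   # alt replaces a key image
        return "indicator"          # no textual equivalent; UA should show an indicator
    if not alt:
        return "nothing"            # no src, and alt is empty or absent
    return "text: " + alt
```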

The alt attribute does not represent advisory information.

User agents must not present the contents of the alt attribute in the same way as content of the title attribute.

While user agents are encouraged to repair cases of missing alt attributes, authors must not rely on such behavior. Requirements for providing text to act as an alternative for images are described in detail below.

The contents of img elements, if any, are ignored for the purposes of rendering.

The usemap attribute, if present, can indicate that the image has an associated image map.

The ismap attribute, when used on an element that is a descendant of an a element with an href attribute, indicates by its presence that the element provides access to a server-side image map. This affects how events are handled on the corresponding a element.

The ismap attribute is a boolean attribute. The attribute must not be specified on an element that does not have an ancestor a element with an href attribute.

The img element supports dimension attributes.

The alt and src IDL attributes must reflect the respective content attributes of the same name.

The crossOrigin IDL attribute must reflect the crossorigin content attribute, limited to only known values.
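A sketch of that reflection, assuming the CORS settings attribute keyword table (anonymous and the empty string map to Anonymous, use-credentials maps to Use Credentials, matched ASCII case-insensitively), an invalid value default of Anonymous, a missing value default of No CORS, and anonymous as the canonical keyword for Anonymous. Because the IDL above declares crossOrigin as a non-nullable DOMString, the sketch returns the empty string for No CORS, the state with no keyword; current browsers, which declare the attribute nullable, return null instead. Function names are hypothetical.

```python
from typing import Optional


def ascii_lower(s: str) -> str:
    # ASCII-only lowercasing, as used for case-insensitive keyword matching.
    return "".join(chr(ord(c) + 32) if "A" <= c <= "Z" else c for c in s)


# Keyword -> state for the CORS settings attribute.
_STATE_FOR_KEYWORD = {
    "anonymous": "Anonymous",
    "": "Anonymous",
    "use-credentials": "Use Credentials",
}
# State -> canonical keyword; No CORS has no keyword.
_CANONICAL_KEYWORD = {"Anonymous": "anonymous", "Use Credentials": "use-credentials"}


def cors_settings_state(value: Optional[str]) -> str:
    if value is None:
        return "No CORS"  # missing value default
    # Unrecognized values use the invalid value default, Anonymous.
    return _STATE_FOR_KEYWORD.get(ascii_lower(value), "Anonymous")


def cross_origin_getter(value: Optional[str]) -> str:
    # Limited to only known values: canonical keyword, or "" if the state has none.
    return _CANONICAL_KEYWORD.get(cors_settings_state(value), "")


for v in (None, "", "USE-Credentials", "bogus"):
    print(repr(v), "->", repr(cross_origin_getter(v)))
```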

The useMap IDL attribute must reflect the usemap content attribute.

The isMap IDL attribute must reflect the ismap content attribute.

width [ = value ]

height [ = value ]

These attributes return the actual rendered dimensions of the image, or zero if the dimensions are not known.

They can be set to change the corresponding content attributes.

naturalWidth

naturalHeight

These attributes return the intrinsic dimensions of the image, or zero if the dimensions are not known.

complete

Returns true if the image has been completely downloaded or if no image is specified; otherwise, returns false.

Image( [ width [, height ] ] )

Returns a new img element, with the width and height attributes set to the values passed in the relevant arguments, if applicable.

The IDL attributes width and height must return the rendered width and height of the image, in CSS pixels, if the image is being rendered, and is being rendered to a visual medium; or else the intrinsic width and height of the image, in CSS pixels, if the image is available but not being rendered to a visual medium; or else 0, if the image is not available. [CSS]

On setting, they must act as if they reflected the respective content attributes of the same name.

The IDL attributes naturalWidth and naturalHeight must return the intrinsic width and height of the image, in CSS pixels, if the image is available, or else 0. [CSS]
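The getter rules for width/height and naturalWidth/naturalHeight can be sketched as below. ImgSnapshot is a hypothetical record: whether an image is "being rendered to a visual medium" is decided by layout in a real user agent, so here it is simply supplied as an optional rendered size.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Size = Tuple[int, int]  # (width, height) in CSS pixels


@dataclass
class ImgSnapshot:
    """Hypothetical snapshot of an img element's layout-related facts."""
    available: bool
    intrinsic: Size                  # meaningful only when available
    rendered_visual: Optional[Size]  # set only if rendered to a visual medium


def idl_width_height(img: ImgSnapshot) -> Size:
    if img.rendered_visual is not None:
        return img.rendered_visual   # rendered to a visual medium
    if img.available:
        return img.intrinsic         # available but not rendered visually
    return (0, 0)                    # not available


def natural_width_height(img: ImgSnapshot) -> Size:
    return img.intrinsic if img.available else (0, 0)
```

For example, a 300x150 image scaled down to 100x50 by CSS reports (100, 50) for width/height but (300, 150) for naturalWidth/naturalHeight.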

The IDL attribute complete must return true if any of the following conditions is true:

Both the src attribute and the srcset attribute are omitted.

The srcset attribute is omitted and the src attribute's value is the empty string.

The img element is completely available.

The img element is broken.

Otherwise, the attribute must return false.

The value of complete can thus change while a script is executing.
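A direct transcription of the complete conditions, as a hypothetical helper. It follows the conditions exactly as written, including the srcset checks; state is passed as a plain string naming one of the four img states.

```python
from typing import Optional


def img_complete(src: Optional[str], srcset: Optional[str], state: str) -> bool:
    """Hypothetical model of the complete IDL attribute.

    src, srcset: attribute values, or None when absent.
    state: "unavailable", "partially available", "completely available" or "broken".
    """
    if src is None and srcset is None:
        return True  # no image specified at all
    if srcset is None and src == "":
        return True  # empty src and no srcset
    return state in ("completely available", "broken")
```

Because state can change between two reads (a fetch may finish while a script is running), code that checks complete once and then relies on it may observe a stale answer.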

A constructor is provided for creating HTMLImageElement objects (in addition to the factory methods from DOM such as createElement()): Image(width, height).

When invoked as a constructor, this must return a new HTMLImageElement object (a new img element). If the width argument is present, the new object's width content attribute must be set to width. If the height argument is also present, the new object's height content attribute must be set to height. The element's document must be the active document of the browsing context of the Window object on which the interface object of the invoked constructor is found.

Text alternatives [WCAG] are a primary way of making visual information accessible, because they can be rendered through any sensory modality (for example, visual, auditory or tactile) to match the needs of the user. Providing text alternatives allows the information to be rendered in a variety of ways by a variety of user agents. For example, a person who cannot see a picture can have the text alternative read aloud using synthesized speech.

The alt attribute on images is a very important accessibility attribute (it is supported by all browsers and most authoring tools, and is the most well-known accessibility technique among authors). Useful alt attribute content enables users who are unable to view images on a page to comprehend and make use of that page as much as those who can.

Except where otherwise specified, the alt attribute must be specified and its value must not be empty; the value must be an appropriate functional replacement for the image. The specific requirements for the alt attribute content depend on the image's function in the page, as described in the following sections.

To determine an appropriate text alternative, it is important to think about why an image is being included in a page. What is its purpose? Thinking like this will help you to understand what is important about the image for the intended audience. Every image has a reason for being on a page: it provides useful information, performs a function, labels an interactive element, enhances aesthetics, or is purely decorative. Therefore, knowing what the image is for makes writing an appropriate text alternative easier.

When an a element that is a hyperlink, or a button element, has no text content but contains one or more images, include text in the alt attribute(s) that together convey the purpose of the link or button.

In this example, a user is asked to pick her preferred color from a list of three. Each color is given by an image, but for users who cannot view the images, the color names are included within the alt attributes of the images:


<ul> <li><a href="red.html"><img src="red.jpeg" alt="Red"></a></li> <li><a href="green.html"><img src="green.jpeg" alt="Green"></a></li> <li><a href="blue.html"><img src="blue.jpeg" alt="Blue"></a></li> </ul>

In this example, a link contains a logo. The link points to the W3C web site from an external site. The text alternative is a brief description of the link target.


<a href="http://w3.org"> <img src="images/w3c_home.png" width="72" height="48" alt="W3C web site"> </a>

This example is the same as the previous example, except that the link is on the W3C web site. The text alternative is a brief description of the link target.


<a href="http://w3.org"> <img src="images/w3c_home.png" width="72" height="48" alt="W3C home"> </a>

In this example, a link contains a print preview icon. The link points to a version of the page with a print stylesheet applied. The text alternative is a brief description of the link target.


<a href="preview.html"> <img src="images/preview.png" width="32" height="30" alt="Print preview."> </a>

In this example, a button contains a search icon. The button submits a search form. The text alternative is a brief description of what the button does.


<button> <img src="images/search.png" width="74" height="29" alt="Search"> </button>

In this example, a company logo for the PIP Corporation has been split into the following two images, the first containing the word PIP and the second with the abbreviated word CO. The images are the sole content of a link to the PIPCO home page. In this case a brief description of the link target is provided. As the images are presented to the user as a single entity, the text alternative "PIP CO home" is in the alt attribute of the first image.

<a href="pipco-home.html"> <img src="pip.gif" alt="PIP CO home"><img src="co.gif" alt=""> </a>
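The rule for links and buttons that contain only images can be checked mechanically. The following is a hypothetical lint built on Python's standard html.parser: it flags any a element with an href, or any button, whose text content plus the alt text of its images is empty. It only approximates "text content" (for example, it ignores CSS-hidden text and aria-label), so treat it as a sketch rather than a conformance checker.

```python
from html.parser import HTMLParser
from typing import List, Optional, Tuple


class ImageOnlyLinkChecker(HTMLParser):
    """Hypothetical lint: flags <a href> and <button> elements where neither
    text content nor image alt text conveys the purpose."""

    def __init__(self) -> None:
        super().__init__()
        # Each open context: (tag name, href or "", collected text fragments).
        self._open: List[Tuple[str, str, List[str]]] = []
        self.problems: List[str] = []

    def handle_starttag(self, tag: str, attrs: List[Tuple[str, Optional[str]]]) -> None:
        attributes = dict(attrs)
        if (tag == "a" and "href" in attributes) or tag == "button":
            self._open.append((tag, attributes.get("href") or "", []))
        elif tag == "img" and self._open:
            # A missing or valueless alt contributes no text.
            self._open[-1][2].append(attributes.get("alt") or "")

    def handle_data(self, data: str) -> None:
        if self._open:
            self._open[-1][2].append(data)

    def handle_endtag(self, tag: str) -> None:
        if self._open and self._open[-1][0] == tag:
            name, href, parts = self._open.pop()
            if not "".join(parts).strip():
                self.problems.append(f"<{name}> {href}".strip())


checker = ImageOnlyLinkChecker()
checker.feed('<a href="pipco-home.html"><img src="pip.gif" alt="PIP CO home">'
             '<img src="co.gif" alt=""></a>'
             '<button><img src="images/search.png"></button>')
checker.close()
print(checker.problems)  # ['<button>']
```

The PIP CO link passes because the first image's alt text names the link target, even though the second image has an empty alt.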

Users can benefit when content is presented in graphical form, for example as a flowchart, a diagram, a graph, or a map showing directions. Users also benefit when content presented in graphical form is also provided in a textual format; these users include those who are unable to view the image (e.g. because they have a very slow connection, because they are using a text-only browser, because they are listening to the page being read out by a hands-free automobile voice Web browser, or because they have a visual impairment and use an assistive technology to render the text to speech).

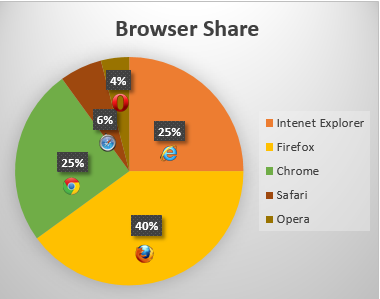

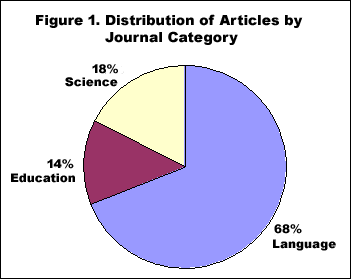

In the following example we have an image of a pie chart, with text in the alt attribute representing the data shown in the pie chart:

<img src="piechart.gif" alt="Pie chart: Browser Share - Internet Explorer 25%, Firefox 40%, Chrome 25%, Safari 6% and Opera 4%.">

In the following case, the image repeats the previous paragraph in graphical form, so the alt attribute content labels the image.

<p>According to a recent study Firefox has a 40% browser share, Internet Explorer has 25%, Chrome has 25%, Safari has 6% and Opera has 4%.</p> <p><img src="piechart.gif" alt="Pie chart representing the data in the previous paragraph."></p>

It can be seen that when the image is not available, for example because the src attribute value is incorrect, the text alternative provides the user with a brief description of the image content.

In cases where the text alternative is lengthy (more than a sentence or two) or would benefit from the use of structured markup, provide a brief description or label using the alt attribute, and an associated text alternative.

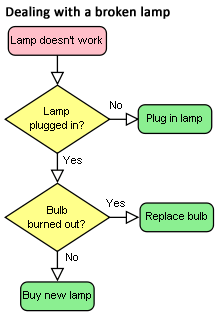

Here's an example of a flowchart image, with a short text alternative included in the alt attribute; in this case the text alternative is a description of the link target, as the image is the sole content of a link. The link points to a description, within the same document, of the process represented in the flowchart.

<a href="#desc"><img src="flowchart.gif" alt="Flowchart: Dealing with a broken lamp."></a> ... ... <div id="desc"> <h2>Dealing with a broken lamp</h2> <ol> <li>Check if it's plugged in, if not, plug it in.</li> <li>If it still doesn't work; check if the bulb is burned out. If it is, replace the bulb.</li> <li>If it still doesn't work; buy a new lamp.</li> </ol> </div>

In this example, there is an image of a chart. It would be inappropriate to provide the information depicted in the chart as a plain text alternative in an alt attribute, as the information is a data set. Instead, a structured text alternative is provided below the image in the form of a data table using the data that is represented in the chart image.

Indications of the highest and lowest rainfall for each season have been included in the table, so trends easily identified in the chart are also available in the data table.

| | United Kingdom | Japan | Australia |
|---|---|---|---|
| Spring | 5.3 (highest) | 2.4 | 2 (lowest) |
| Summer | 4.5 (highest) | 3.4 | 2 (lowest) |
| Autumn | 3.5 (highest) | 1.8 | 1.5 (lowest) |
| Winter | 1.5 (highest) | 1.2 | 1 (lowest) |

<img src="rainchart.gif" alt="Bar chart: Average rainfall in millimetres by Country and Season."> <table> <caption>Rainfall in millimetres by Country and Season.</caption> <tr><td><th scope="col">UK <th scope="col">Japan<th scope="col">Australia</tr> <tr><th scope="row">Spring <td>5.5 (highest)<td>2.4 <td>2 (lowest)</tr> <tr><th scope="row">Summer <td>4.5 (highest)<td>3.4<td>2 (lowest)</tr> <tr><th scope="row">Autumn <td>3.5 (highest) <td>1.8 <td>1.5 (lowest)</tr> <tr><th scope="row">Winter <td>1.5 (highest) <td>1.2 <td>1 (lowest)</tr> </table>

Sometimes, an image only contains text, and the purpose of the image is to display text using visual effects and/or fonts. It is strongly recommended that text styled using CSS be used, but if this is not possible, provide the same text in the alt attribute as is in the image.

This example shows an image of the text "Get Happy!" written in a fancy multicolored freehand style. The image makes up the content of a heading. In this case the text alternative for the image is "Get Happy!".

<h1><img src="gethappy.gif" alt="Get Happy!"></h1>

In this example we have an advertising image consisting of text: the phrase "The BIG sale" is repeated three times, each time smaller and fainter, and the last line reads "...ends Friday". In the context of use, as an advertisement, it is recommended that the image's text alternative include the text "The BIG sale" only once, as the repetition is for visual effect; repeating the text for users who cannot view the image is unnecessary and could be confusing.

<p><img src="sale.gif" alt="The BIG sale ...ends Friday."></p>

In situations where there is also a photo or other graphic along with the image of text, ensure that the words in the image text are included in the text alternative, along with any other description of the image that conveys meaning to users who can view the image, so the information is also available to users who cannot view the image.

When an image is used to represent a character that cannot otherwise be represented in Unicode, for example gaiji, itaiji, or new characters such as novel currency symbols, the text alternative should be a more conventional way of writing the same thing, e.g. using the phonetic hiragana or katakana to give the character's pronunciation.

In this example from 1997, a new-fangled currency symbol that looks like a curly E with two bars in the middle instead of one is represented using an image. The alternative text gives the character's pronunciation.


<p>Only <img src="euro.png" alt="euro ">5.99!

An image should not be used if Unicode characters would serve an identical purpose. Only when the text cannot be directly represented using Unicode, e.g. because of decorations or because the character is not in the Unicode character set (as in the case of gaiji), would an image be appropriate.

If an author is tempted to use an image because their default system font does not support a given character, then Web Fonts are a better solution than images.

An illuminated manuscript might use graphics for some of its letters. The text alternative in such a situation is just the character that the image represents.


<p><img src="initials/fancyO.png" alt="O">nce upon a time and a long long time ago...

Sometimes an image consists of a graphic, such as a chart, and associated text. In this case it is recommended that the text in the image be included in the text alternative.

Consider an image containing a pie chart and associated text. It is recommended, wherever possible, to provide any associated text as text, not an image of text. If this is not possible, include the text in the text alternative along with the pertinent information conveyed in the image.

<p><img src="figure1.gif" alt="Figure 1. Distribution of Articles by Journal Category. Pie chart: Language=68%, Education=14% and Science=18%."></p>

Here's another example of the same pie chart image, showing a short text alternative included in the alt attribute and a longer text alternative in text. The figure and figcaption elements are used to associate the longer text alternative with the image. The alt attribute is used to label the image.

<figure> <img src="figure1.gif" alt="Figure 1"> <figcaption><strong>Figure 1.</strong> Distribution of Articles by Journal Category. Pie chart: Language=68%, Education=14% and Science=18%.</figcaption> </figure>

The advantage of this method over the previous example is that the text alternative is available to all users at all times. It also allows structured markup to be used in the text alternative, whereas a text alternative provided using the alt attribute does not.

An image that isn't discussed directly by the surrounding text but still has some relevance can be included in a page using the img element. Such images are more than mere decoration; they may augment the themes or subject matter of the page content and so still form part of the content. In these cases, it is recommended that a text alternative be provided.

Here is an example of an image closely related to the subject matter of the page content but not directly discussed: an image of a painting inspired by a poem, on a page reciting that poem.

The following snippet shows an example. The image is a painting titled "The Lady of Shalott"; it is inspired by the poem and its subject matter is derived from the poem. Therefore it is strongly recommended that a text alternative be provided. There is a short description of the content of the image in the alt attribute and a link below the image to a longer description located at the bottom of the document. At the end of the longer description there is also a link to further information about the painting.

<header> <h1>The Lady of Shalott</h1> <p>A poem by Alfred Lord Tennyson</p> </header> <img src="shalott.jpeg" alt="Painting of a young woman with long hair, sitting in a wooden boat. "> <p><a href="#des">Description of the painting</a>.</p> <!-- Full Recitation of Alfred, Lord Tennyson's Poem. --> ... ... ... <p id="des">The woman in the painting is wearing a flowing white dress. A large piece of intricately patterned fabric is draped over the side. In her right hand she holds the chain mooring the boat. Her expression is mournful. She stares at a crucifix lying in front of her. Beside it are three candles. Two have blown out. <a href="http://bit.ly/5HJvVZ">Further information about the painting</a>.</p>

In many cases, the image is actually just supplementary, and its presence merely reinforces the surrounding text. In these cases, the alt attribute must be present but its value must be the empty string.

In general, an image falls into this category if removing the image doesn't make the page any less useful, but including the image makes it a lot easier for users of visual browsers to understand the concept.

It is not always easy to write a useful text alternative for an image; another option is to provide a link to a description or further information about the image when one is available.

In this example of the same image, there is a short text alternative included in the alt attribute, and there is a link after the image. The link points to a page containing information about the painting.


<header><h1>The Lady of Shalott</h1> <p>A poem by Alfred Lord Tennyson</p></header> <figure> <img src="shalott.jpeg" alt="Painting of a woman in a white flowing dress, sitting in a small boat."> <p><a href="http://bit.ly/5HJvVZ">About this painting.</a></p> </figure> <!-- Full Recitation of Alfred, Lord Tennyson's Poem. -->

Purely decorative images are visual enhancements, decorations or embellishments that provide no function or information beyond aesthetics to users who can view the images.

Mark up purely decorative images so they can be ignored by assistive technology by using an empty alt attribute (alt=""). While it is not unacceptable to include decorative images inline, it is recommended that purely decorative images be included using CSS.

Here's an example of an image being used as a decorative banner for a person's blog; the image offers no information and so an empty alt attribute is used.


<header> <div><img src="border.gif" alt="" width="400" height="30"></div> <h1>Clara's Blog</h1> </header> <p>Welcome to my blog...</p>

When images are used inline as part of the flow of text in a sentence, provide a word or phrase as a text alternative which makes sense in the context of the sentence it is a part of.


I <img src="heart.png" alt="love"> you.


My <img src="heart.png" alt="heart"> breaks.

When a picture has been sliced into smaller image files that are then displayed together to form the complete picture again, include a text alternative for one of the images using the alt attribute as per the relevant guidance for the picture as a whole, and then include an empty alt attribute on the other images.

In this example, a picture representing a company logo for the PIP Corporation has been split into two pieces, the first containing the letters "PIP" and the second with the word "CO". The text alternative "PIP CO" is in the alt attribute of the first image.

<img src="pip.gif" alt="PIP CO"><img src="co.gif" alt="">

In the following example, a rating is shown as three filled stars and two empty stars. While the text alternative could have been "★★★☆☆", the author has instead decided to more helpfully give the rating in the form "3 out of 5". That is the text alternative of the first image, and the rest have empty alt attributes.
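The slicing pattern described above, where only the first image carries the text alternative for the whole picture and every other slice gets an empty alt attribute, is easy to generate programmatically. The following is a minimal Python sketch, assuming a hypothetical helper named rating_markup and reusing the placeholder image URLs "1" (filled star) and "0" (empty star) from the example; it is an illustration, not a required technique.

```python
from html import escape


def rating_markup(value: int, maximum: int, filled_src: str = "1", empty_src: str = "0") -> str:
    """Render a star rating as sliced images inside a meter element."""
    if not 0 <= value <= maximum:
        raise ValueError("value must be between 0 and maximum")
    images = []
    for i in range(maximum):
        src = filled_src if i < value else empty_src
        # Only the first slice carries the text alternative for the whole picture.
        alt = f"{value} out of {maximum}" if i == 0 else ""
        images.append(f'<img src="{escape(src)}" alt="{escape(alt)}">')
    return f'<p>Rating: <meter max={maximum} value={value}>{"".join(images)}</meter></p>'
```

Calling rating_markup(3, 5) produces the same structure as the example above, with alt="3 out of 5" on the first image and alt="" on the other four.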


<p>Rating: <meter max=5 value=3> <img src="1" alt="3 out of 5"> <img src="1" alt=""><img src="1" alt=""> <img src="0" alt=""><img src="0" alt=""> </meter></p>

If an img element has a usemap attribute which references a map element containing area elements that have href attributes, the img is considered to be interactive content. In such cases, always provide a text alternative for the image using the alt attribute.

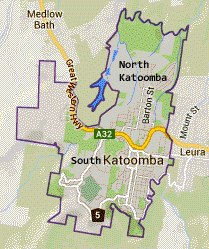

Consider the following image, which is a map of Katoomba; it has two interactive regions corresponding to the areas of North and South Katoomba:

The text alternative is a brief description of the image. The alt attribute on each of the area elements provides text describing the content of the target page of each linked region:

<p>View houses for sale in North Katoomba or South Katoomba:</p> <p><img src="imagemap.png" width="209" alt="Map of Katoomba" height="249" usemap="#Map"> <map name="Map"> <area shape="poly" coords="78,124,124,10,189,29,173,93,168,132,136,151,110,130" href="north.html" alt="Houses in North Katoomba"> <area shape="poly" coords="66,63,80,135,106,138,137,154,167,137,175,133,144,240,49,223,17,137,17,61" alt="Houses in South Katoomba" href="south.html"> </map></p>

Generally, image maps should be used instead of slicing an image for links.

Sometimes, when you create a composite picture from multiple images, you may wish to link one or more of the images. Provide an alt attribute for each linked image to describe the purpose of the link.

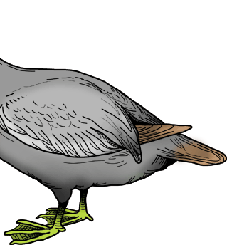

In the following example, a composite picture is used to represent a "crocoduck", a fictional creature which defies evolutionary principles by being part crocodile and part duck. You are asked to interact with the crocoduck, but you need to exercise caution...

<h1>The crocoduck</h1> <p>You encounter a strange creature called a "crocoduck". The creature seems angry! Perhaps some friendly stroking will help to calm it, but be careful not to stroke any crocodile parts. This would just enrage the beast further.</p> <a href="?stroke=head"><img src="crocoduck1.png" alt="Stroke crocodile's angry, chomping head"></a> <a href="?stroke=body"><img src="crocoduck2.png" alt="Stroke duck's soft, feathery body"></a>

Images of pictures or graphics include visual representations of objects, people, scenes, abstractions, etc. This non-text content [WCAG] can convey a significant amount of information visually or provide a specific sensory experience [WCAG] to a sighted person. Examples include photographs, paintings, drawings and artwork.

An appropriate text alternative for a picture is a brief description, or name [WCAG]. As in all text alternative authoring decisions, writing suitable text alternatives for pictures requires human judgment. The text value depends on the context where the image is used and on the page author's writing style. Therefore, there is no single 'right' or 'correct' piece of alt text for any particular image. In addition to providing a short text alternative that gives a brief description of the non-text content, it may be useful to provide supplemental content through another means when appropriate.

This first example shows an image uploaded to a photo-sharing site. The photo is of a cat sitting in the bath. The image has a text alternative provided using the img element's alt attribute. It also has a caption provided by including the img element in a figure element and using a figcaption element to identify the caption text.


<figure> <img src="664aef.jpg" alt="Lola the cat sitting under an umbrella in the bath tub."> <figcaption>Lola prefers a bath to a shower.</figcaption> </figure>

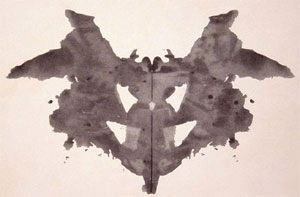

This example is of an image that defies a complete description, as the subject of the image is open to interpretation. The image has a text alternative in the alt attribute which gives users who cannot view the image a sense of what the image is. It also has a caption provided by including the img element in a figure element and using a figcaption element to identify the caption text.


<figure> <img src="Rorschach1.jpg" alt="An abstract, freeform, vertically symmetrical, black inkblot on a light background."> <figcaption>The first of the ten cards in the Rorschach test.</figcaption> </figure>

Webcam images are static images that are automatically updated periodically. Typically the images are from a fixed viewpoint; they may update on the page automatically as each new image is uploaded from the camera, or the user may be required to refresh the page to view an updated image. Examples include traffic and weather cameras.

This example is fairly typical; the title and a time stamp are included in the image, automatically generated by the webcam software. It would be better if the text information was not included in the image, but as it is part of the image, include it as part of the text alternative. A caption is also provided using the figure and figcaption elements. As the image is provided to give a visual indication of the current weather near a building, a link to a local weather forecast is provided, because with automatically generated and uploaded webcam images it may be impractical to provide such information as a text alternative.

The text of the alt attribute includes a prose version of the timestamp, designed to make the text more understandable when announced by text-to-speech software. The text alternative also includes a description of some aspects of what can be seen in the image which are unchanging, although weather conditions and time of day change.
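Webcam software could assemble such an alt value automatically from the capture time and a fixed description of the scene. The following Python sketch is a hypothetical helper (webcam_alt and its parameters are illustrative names, not part of any webcam package); it assumes the day/month/two-digit-year date form used in the Sopwith house example.

```python
from datetime import datetime


def webcam_alt(camera_name: str, taken: datetime, fixed_scene: str) -> str:
    """Build an alt value: camera name, prose timestamp, unchanging scene."""
    unit = "second" if taken.second == 1 else "seconds"
    # Day/month/two-digit year, spoken-friendly "and N seconds" suffix.
    when = f"Taken on the {taken:%d/%m/%y} at {taken:%H:%M} and {taken.second} {unit}."
    return f"{camera_name}. {when} {fixed_scene}"
```

Because only the timestamp changes between uploads, the scene description can be written once by a person and reused for every generated image.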


<figure> <img src="webcam1.jpg" alt="Sopwith house weather cam. Taken on the 21/04/10 at 11:51 and 34 seconds. In the foreground are the safety rails on the flat part of the roof. Nearby there are low rise industrial buildings, beyond are blocks of flats. In the distance there's a church steeple."> <figcaption>View from Sopwith house, looking towards north Kingston. This image is updated every hour.</figcaption> </figure> <p>View the <a href="http://news.bbc.co.uk/weather/forecast/4296?area=Kingston">latest weather details</a> for Kingston upon Thames.</p>

In some cases an image is included in a published document, but the author is unable to provide an appropriate text alternative. In such cases the minimum requirement is to provide a caption for the image using the figure and figcaption elements under the following conditions:

The img element is in a figure element.

The figure element contains a figcaption element.

The figcaption element contains content other than inter-element whitespace.

Ignoring the figcaption element and its descendants, the figure element has no Text node descendants other than inter-element whitespace, and no embedded content descendant other than the img element.

In other words, the only content of the figure is an img element and a figcaption element, and the figcaption element must include (caption) content.

Such cases are to be kept to an absolute minimum. If there is even the slightest possibility of the author having the ability to provide real alternative text, then it would not be acceptable to omit the alt attribute.

In this example, a person uploads a photo, as part of a bulk upload of many images, to a photo sharing site. The user has not provided a text alternative or a caption for the image. The site's authoring tool inserts a caption automatically using whatever useful information it has for the image. In this case it's the file name and date the photo was taken.


<figure> <img src="clara.jpg"> <figcaption>clara.jpg, taken on 12/11/2010.</figcaption> </figure>

Notice that even in this example, as much useful information as possible is still included in the figcaption element.

In this second example, a person uploads a photo to a photo sharing site. She has provided a caption for the image but not a text alternative. This may be because the site does not provide users with the ability to add a text alternative in the alt attribute.


<figure> <img src="elo.jpg"> <figcaption>Eloisa with Princess Belle</figcaption> </figure>

Sometimes the entire point of the image is that a textual description is not available, and the user is to provide the description. An example is software that displays images and asks for alternative text precisely for the purpose of then writing a page with correct alternative text. Such a page could have a table of images, like this:

<table> <tr> <th> Image <th> Description<tr> <td> <figure> <img src="2421.png"> <figcaption>Image 640 by 100, filename 'banner.gif'</figcaption> </figure> <td> <input name="alt2421"> <tr> <td> <figure> <img src="2422.png"> <figcaption>Image 200 by 480, filename 'ad3.gif'</figcaption> </figure> <td> <input name="alt2422"> </table>

Since some users cannot use images at all (e.g. because they are blind), the alt attribute is only allowed to be omitted when no text alternative is available and none can be made available, as in the above examples.

Generally, authors should avoid using img elements for purposes other than showing images.

If an img element is being used for purposes other than showing an image, e.g. as part of a service to count page views, use an empty alt attribute.

Here is an example of an img element used to collect web page statistics. The alt attribute is empty as the image has no meaning.

<img src="http://server3.stats.com/count.pl?NeonMeatDream.com" width="0" height="0" alt="">

For the example use above, it is recommended that the width and height attributes be set to zero.

Another example use is when an image such as a spacer.gif is used to aid positioning of content. The alt attribute is empty as the image has no meaning.

<img src="spacer.gif" width="10" height="10" alt="">

It is recommended that CSS be used to position content instead of img elements.

An icon is usually a simple picture representing a program, action, data file or a concept. Icons are intended to help users of visual browsers to recognize features at a glance.

Use an empty alt attribute when an icon is supplemental to text conveying the same meaning.

In this example, we have a link pointing to a site's home page; the link contains a house icon image and the text "Home". The image has an empty alt attribute. Where images are used in this way, it would also be appropriate to add the image using CSS.


<a href="home.html"><img src="home.gif" width="15" height="15" alt="">Home</a>

#home:before { content: url(home.png); }

<a href="home.html" id="home">Home</a>

In this example, there is a warning message, with a warning icon. The word "Warning!" is in emphasized text next to the icon. As the information conveyed by the icon is redundant, the img element is given an empty alt attribute.


<p><img src="warning.png" width="15" height="15" alt=""> <strong>Warning!</strong> Your session is about to expire</p>

When an icon conveys additional information not available in text, provide a text alternative.

In this example, there is a warning message, with a warning icon. The icon emphasizes the importance of the message and identifies it as a particular type of content.


<p><img src="warning.png" width="15" height="15" alt="Warning!"> Your session is about to expire</p>

Many pages include logos, insignia, flags, or emblems, which stand for a company, organization, project, band, software package, country, or other entity. As with all images, what can be considered an appropriate text alternative depends upon the context in which the image is being used and what function it serves in that context.

If a logo is the sole content of a link, provide a brief description of the link target in the alt attribute.

This example illustrates the use of the HTML5 logo as the sole content of a link to the HTML specification.

<a href="http://dev.w3.org/html5/spec/spec.html"> <img src="HTML5_Logo.png" alt="HTML 5.1 specification"></a>

If a logo is being used to represent the entity, e.g. as a page heading, provide the name of the entity being represented by the logo as the text alternative.

This example illustrates the use of the WebPlatform.org logo being used to represent itself.


<h2><img src="images/webplatform.png" alt="WebPlatform.org"> and other developer resources</h2>

If a logo is being used next to the name of what it represents, then the logo is supplemental. Include an empty alt attribute, as the text alternative is already provided.

This example illustrates the use of a logo next to the name of the organization it represents.


<img src="images/webplatform1.png" alt=""> WebPlatform.org

If the logo is used alongside text discussing the subject or entity the logo represents, then provide a text alternative which describes the logo.

This example illustrates the use of a logo next to text discussing the subject the logo represents.

HTML5 is a language for structuring and presenting content for the World Wide Web, a core technology of the Internet. It is the latest revision of the HTML standard (originally created in 1990 and most recently standardized as HTML4 in 1997) and currently remains under development. Its core aims have been to improve the language with support for the latest multimedia while keeping it easily readable by humans and consistently understood by computers and devices (web browsers, parsers etc.).

<p><img src="HTML5_Logo.png" alt="HTML5 logo: Shaped like a shield with the text 'HTML' above and the numeral '5' prominent on the face of the shield."></p> Information about HTML5

CAPTCHA stands for "Completely Automated Public Turing test to tell Computers and Humans Apart". CAPTCHA images are used for security purposes to confirm that content is being accessed by a person rather than a computer. This authentication is done through visual verification of an image. CAPTCHA typically presents an image with characters or words in it that the user is to re-type. The image is usually distorted and has some noise applied to it to make the characters difficult to read.

To improve the accessibility of CAPTCHA, provide text alternatives that identify and describe the purpose of the image, and provide alternative forms of the CAPTCHA using output modes for different types of sensory perception. For instance, provide an audio alternative along with the visual image, and place the audio option right next to the visual one. This helps but is still problematic for people without sound cards, the deaf-blind, and some people with limited hearing. Another method is to include a form that asks a question along with the visual image. This helps but can be problematic for people with cognitive impairments.

It is strongly recommended that alternatives to CAPTCHA be used, as all forms of CAPTCHA introduce unacceptable barriers to entry for users with disabilities. Further information is available in Inaccessibility of CAPTCHA.

This example shows a CAPTCHA test which uses a distorted image of text. The text alternative in the alt attribute provides instructions for a user in the case where she cannot access the image content.

Example code:

<img src="captcha.png" alt="If you cannot view this image an audio challenge is provided."> <!-- audio CAPTCHA option that allows the user to listen and type the word --> <!-- form that asks a question -->

Markup generators (such as WYSIWYG authoring tools) should, wherever possible, obtain a text alternative from their users. However, it is recognized that in many cases, this will not be possible.

For images that are the sole contents of links, markup generators should examine the link target to determine the title of the target, or the URL of the target, and use information obtained in this manner as the text alternative.

For images that have captions, markup generators should use the figure and figcaption elements to provide the image's caption.

As a last resort, implementors should either set the alt attribute to the empty string, under the assumption that the image is a purely decorative image that doesn't add any information but is still specific to the surrounding content, or omit the alt attribute altogether, under the assumption that the image is a key part of the content.

Markup generators may specify a generator-unable-to-provide-required-alt attribute on img elements for which they have been unable to obtain a text alternative and for which they have therefore omitted the alt attribute. The value of this attribute must be the empty string. Documents containing such attributes are not conforming, but conformance checkers will silently ignore this error.
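The preference order described above can be sketched as a single decision function: user-supplied text first, then information from the link target for an image that is the sole content of a link, then one of the two last-resort choices. This is a hypothetical helper, assuming the generator already knows the link target's title or URL and whether it is willing to guess that the image is decorative; when it is not, the sketch omits alt and adds the empty generator-unable-to-provide-required-alt attribute. It never falls back to the image's file name.

```python
from typing import Dict, Optional


def generator_img_attrs(
    src: str,
    user_alt: Optional[str] = None,
    sole_link_target_title: Optional[str] = None,
    sole_link_target_url: Optional[str] = None,
    assume_decorative: bool = False,
) -> Dict[str, str]:
    """Choose img attributes the way a markup generator might."""
    attrs = {"src": src}
    if user_alt is not None:
        attrs["alt"] = user_alt  # best case: the user supplied the text
    elif sole_link_target_title:
        attrs["alt"] = sole_link_target_title  # image is the only content of a link
    elif sole_link_target_url:
        attrs["alt"] = sole_link_target_url
    elif assume_decorative:
        attrs["alt"] = ""  # last resort: treat as purely decorative
    else:
        # Last resort: omit alt and flag it instead of inventing phony text.
        attrs["generator-unable-to-provide-required-alt"] = ""
    return attrs
```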

This is intended to avoid markup generators being pressured into replacing the error of omitting the alt attribute with the even more egregious error of providing phony text alternatives, because state-of-the-art automated conformance checkers cannot distinguish phony text alternatives from correct text alternatives.

Markup generators should generally avoid using the image's own file name as the text alternative. Similarly, markup generators should avoid generating text alternatives from any content that will be equally available to presentation user agents (e.g. Web browsers).

This is because once a page is generated, it will typically not be updated, whereas the browsers that later read the page can be updated by the user; therefore, the browser is likely to have more up-to-date and finely tuned heuristics than the markup generator did when generating the page.

A conformance checker must report the lack of an alt attribute as an error unless one of the conditions listed below applies:

The img element is in a figure element that satisfies the conditions described above.

The img element has a (non-conforming) generator-unable-to-provide-required-alt attribute whose value is the empty string. A conformance checker that is not reporting the lack of an alt attribute as an error must also not report the presence of the empty generator-unable-to-provide-required-alt attribute as an error. (This case does not represent a case where the document is conforming, only that the generator could not determine appropriate alternative text. Validators are not required to show an error in this case, because such an error might encourage markup generators to include bogus alternative text purely in an attempt to silence validators. Naturally, conformance checkers may report the lack of an alt attribute as an error even in the presence of the generator-unable-to-provide-required-alt attribute; for example, there could be a user option to report all conformance errors, even those that might be the more or less inevitable result of using a markup generator.)
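The conformance-checker rule above, combined with the figure conditions listed earlier, can be sketched over a toy tree. The Node class and the function names are hypothetical stand-ins for a real checker's DOM; the sketch assumes the embedded content elements are audio, canvas, embed, iframe, img, math, object, svg and video, and that the caller passes the img's nearest figure ancestor, if any.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterator, List, Optional

EMBEDDED = frozenset({"audio", "canvas", "embed", "iframe", "img", "math", "object", "svg", "video"})
SPACE_CHARS = " \t\n\f\r"


@dataclass(eq=False)  # identity comparison, so two identical imgs stay distinct
class Node:
    """Toy DOM node: tag "#text" for text nodes, otherwise an element name."""
    tag: str
    text: str = ""
    attrs: Dict[str, str] = field(default_factory=dict)
    children: List["Node"] = field(default_factory=list)


def is_blank(node: Node) -> bool:
    """True only for text nodes that are inter-element whitespace."""
    return node.tag == "#text" and node.text.strip(SPACE_CHARS) == ""


def walk_skipping_figcaption(node: Node) -> Iterator[Node]:
    """Yield descendants of node without entering figcaption subtrees."""
    for child in node.children:
        if child.tag == "figcaption":
            continue
        yield child
        yield from walk_skipping_figcaption(child)


def figure_excuses_missing_alt(figure: Node, img: Node) -> bool:
    captions = [c for c in figure.children if c.tag == "figcaption"]
    # The figure needs a figcaption with real (non-whitespace) content.
    if not captions or all(is_blank(n) for n in captions[0].children):
        return False
    found_img = False
    for node in walk_skipping_figcaption(figure):
        if node.tag == "#text" and not is_blank(node):
            return False  # stray text outside the caption
        if node.tag in EMBEDDED:
            if node is not img:
                return False  # some other embedded content
            found_img = True
    return found_img


def missing_alt_is_error(img: Node, figure: Optional[Node] = None) -> bool:
    if "alt" in img.attrs:
        return False
    if figure is not None and figure_excuses_missing_alt(figure, img):
        return False
    if img.attrs.get("generator-unable-to-provide-required-alt") == "":
        return False  # not conforming, but reported silently
    return True
```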

iframe element

src - Address of the resource

srcdoc - A document to render in the iframe

name - Name of nested browsing context

sandbox - Security rules for nested content

width - Horizontal dimension

height - Vertical dimension

Allowed roles: application, document, img or presentation; aria-* attributes applicable to the allowed roles.

interface HTMLIFrameElement : HTMLElement {

attribute DOMString src;

attribute DOMString srcdoc;

attribute DOMString name;

[PutForwards=value] readonly attribute DOMSettableTokenList sandbox;

attribute DOMString width;

attribute DOMString height;

readonly attribute Document? contentDocument;

readonly attribute WindowProxy? contentWindow;

};

The iframe element represents a nested browsing context.

The src attribute gives the address of a page that the nested browsing context is to contain. The attribute, if present, must be a valid non-empty URL potentially surrounded by spaces.

The srcdoc attribute gives the content of the page that the nested browsing context is to contain. The value of the attribute is the source of an iframe srcdoc document.

For iframe elements in HTML documents, the srcdoc attribute, if present, must have a value using the HTML syntax that consists of the following syntactic components, in the given order:

html element.

For iframe elements in XML documents, the srcdoc attribute, if present, must have a value that matches the production labeled document in the XML specification. [XML]

In the HTML syntax, authors need only remember to use U+0022 QUOTATION MARK characters (") to wrap the attribute contents, then to escape all U+0022 QUOTATION MARK (") and U+0026 AMPERSAND (&) characters, and to specify the sandbox attribute, to ensure safe embedding of content.

Due to restrictions of the XHTML syntax, in XML the "<" (U+003C) character needs to be escaped as well. In order to prevent attribute-value normalization, some of XML's whitespace characters — specifically "tab" (U+0009), "LF" (U+000A), and "CR" (U+000D) — also need to be escaped. [XML]
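The escaping rules in the two paragraphs above can be captured in a couple of small functions. This is a minimal Python sketch with hypothetical function names; it assumes the attribute value will be wrapped in double quotes, and it uses hexadecimal character references for the XML whitespace characters.

```python
def escape_srcdoc_html(markup: str) -> str:
    """Escape a document for a double-quoted srcdoc attribute (HTML syntax)."""
    # Replace "&" first so the entities added for quotes are not escaped again.
    return markup.replace("&", "&amp;").replace('"', "&quot;")


def escape_srcdoc_xml(markup: str) -> str:
    """Escape for srcdoc in XHTML: also "<" and the tab, LF and CR characters."""
    escaped = escape_srcdoc_html(markup).replace("<", "&lt;")
    # Character references survive XML attribute-value normalization.
    for char, reference in (("\t", "&#x9;"), ("\n", "&#xA;"), ("\r", "&#xD;")):
        escaped = escaped.replace(char, reference)
    return escaped
```

A caller would then emit something like f'<iframe sandbox srcdoc="{escape_srcdoc_html(page)}"></iframe>', keeping the sandbox attribute as the text recommends.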

If the src attribute and the srcdoc attribute are both specified together, the srcdoc attribute takes priority. This allows authors to provide a fallback URL for legacy user agents that do not support the srcdoc attribute.

When an iframe element is inserted into a document, the user agent must create a nested browsing context, and then process the iframe attributes for the "first time".

When an iframe element is removed from a document, the user agent must discard the nested browsing context. This happens without any unload events firing (the nested browsing context and its Document are discarded, not unloaded).

Whenever an iframe element with a nested browsing context has its srcdoc attribute set, changed, or removed, the user agent must process the iframe attributes.

Similarly, whenever an iframe element with a nested browsing context but with no srcdoc attribute specified has its src attribute set, changed, or removed, the user agent must process the iframe attributes.

When the user agent is to process the iframe attributes, it must run the first appropriate steps from the following list:

If the srcdoc attribute is specified: Navigate the element's child browsing context to a resource whose Content-Type is text/html, whose URL is about:srcdoc, and whose data consists of the value of the attribute. The resulting Document must be considered an iframe srcdoc document.

If the element has no src attribute specified, and the user agent is processing the iframe's attributes for the "first time": Queue a task to run the iframe load event steps.

The task source for this task is the DOM manipulation task source.

Otherwise:

If the value of the src attribute is missing, or its value is the empty string, let url be the string "about:blank".

Otherwise, resolve the value of the src attribute, relative to the iframe element. If that is not successful, then let url be the string "about:blank". Otherwise, let url be the resulting absolute URL.

If there exists an ancestor browsing context whose active document's address, ignoring fragment identifiers, is equal to url, then abort these steps.

Navigate the element's child browsing context to url.
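The branch selection in the process the iframe attributes algorithm can be sketched as a pure function that returns which action a user agent would take. This is a simplified model under stated assumptions: the function and action names are hypothetical, URL resolution is approximated with Python's urllib.parse.urljoin (which does not implement browser URL parsing exactly), and "not successful" is approximated as a result with no scheme or a parse error. Task queuing and the navigation itself are out of scope.

```python
from typing import Optional, Sequence, Tuple
from urllib.parse import urldefrag, urljoin, urlsplit

SPACE_CHARS = " \t\n\f\r"


def resolve(value: str, base_url: str) -> Optional[str]:
    """Approximate URL resolution; None stands in for 'not successful'."""
    try:
        joined = urljoin(base_url, value.strip(SPACE_CHARS))
        return joined if urlsplit(joined).scheme else None
    except ValueError:
        return None


def process_iframe_attributes(
    srcdoc: Optional[str],
    src: Optional[str],
    first_time: bool,
    base_url: str,
    ancestor_addresses: Sequence[str] = (),
) -> Tuple[str, Optional[str]]:
    """Return (action, argument) for the first applicable branch."""
    if srcdoc is not None:
        # srcdoc takes priority over src; the content is loaded as about:srcdoc.
        return ("navigate-srcdoc", srcdoc)
    if src is None and first_time:
        # Nothing to load: just queue the iframe load event steps.
        return ("queue-load-event", None)
    if not src:  # attribute missing or empty string
        url = "about:blank"
    else:
        url = resolve(src, base_url) or "about:blank"
    # Abort if an ancestor already shows this address (fragments ignored).
    target = urldefrag(url)[0]
    if any(urldefrag(a)[0] == target for a in ancestor_addresses):
        return ("abort", None)
    return ("navigate", url)
```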

Any navigation required of the user agent in the process the iframe attributes algorithm must be completed as an explicit self-navigation override and with the iframe element's document's browsing context as the source browsing context.

Furthermore, if the active document of the element's child browsing context before such a navigation was not completely loaded at the time of the new navigation, then the navigation must be completed with replacement enabled.

Similarly, if the child browsing context's session history contained only one Document when the process the iframe attributes algorithm was invoked, and that was the about:blank Document created when the child browsing context was created, then any navigation required of the user agent in that algorithm must be completed with replacement enabled.

When a Document in an iframe is marked as completely loaded, the user agent must synchronously run the iframe load event steps.

A load event is also fired at the iframe element when it is created if no other data is loaded in it.

Each Document has an iframe load in progress flag and a mute iframe load flag. When a Document is created, these flags must be unset for that Document.

The iframe load event steps are as follows:

Let child document be the active document of the iframe element's nested browsing context.

If child document has its mute iframe load flag set, abort these steps.

Set child document's iframe load in progress flag.

Fire a simple event named load at the iframe element.

Unset child document's iframe load in progress flag.
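The iframe load event steps above amount to a guarded dispatch with a flag held set for the duration of the event. The following Python sketch models only those two flags; ChildDocument and run_iframe_load_event_steps are hypothetical names, and fire_load stands in for firing the simple load event at the iframe element. The try/finally is a modeling choice so the flag is always unset afterwards, since listener exceptions are reported rather than propagated in a browser.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ChildDocument:
    """Only the two per-Document flags used by the load event steps."""
    iframe_load_in_progress: bool = False
    mute_iframe_load: bool = False


def run_iframe_load_event_steps(child: ChildDocument, fire_load: Callable[[], None]) -> bool:
    """Run the steps; return True if the load event was fired."""
    if child.mute_iframe_load:
        return False  # abort these steps
    child.iframe_load_in_progress = True
    try:
        fire_load()  # fire a simple event named load at the iframe element
    finally:
        child.iframe_load_in_progress = False
    return True
```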

This, in conjunction with scripting, can be used to probe the URL space of the local network's HTTP servers. User agents may implement cross-origin access control policies that are stricter than those described above to mitigate this attack, but unfortunately such policies are typically not compatible with existing Web content.

When the iframe's browsing context's active document is not ready for post-load tasks, and when anything in the iframe is delaying the load event of the iframe's browsing context's active document, and when the iframe's browsing context is in the delaying load events mode, the iframe must delay the load event of its document.

If, during the handling of the load event, the browsing context in the iframe is again navigated, that will further delay the load event.

If, when the element is created, the srcdoc attribute is not set, and the src attribute is either also not set or set but its value cannot be resolved, the browsing context will remain at the initial about:blank page.

If the user navigates away from this page, the iframe's corresponding WindowProxy object will proxy new Window objects for new Document objects, but the src attribute will not change.

The name attribute, if present, must be a valid browsing context name. The given value is used to name the nested browsing context. When the browsing context is created, if the attribute is present, the browsing context name must be set to the value of this attribute; otherwise, the browsing context name must be set to the empty string.

Whenever the name attribute is set, the nested browsing context's name must be changed to the new value. If the attribute is removed, the browsing context name must be set to the empty string.
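The name rules above separate an author conformance check from the user agent's behavior, which simply mirrors the attribute. This Python sketch uses hypothetical function names and assumes the specification's definition of a valid browsing context name (a non-empty string that does not start with "_", since names such as _blank and _top are reserved keywords), which is not restated in this section.

```python
from typing import Optional


def is_valid_browsing_context_name(name: str) -> bool:
    """Author-side check: at least one character, no leading underscore."""
    return len(name) > 0 and not name.startswith("_")


def nested_context_name(name_attr: Optional[str]) -> str:
    """User-agent side: attribute present -> its value; absent or removed -> ""."""
    return name_attr if name_attr is not None else ""
```

Note that nested_context_name does not validate its input: an invalid value makes the document non-conforming, but the browsing context is still named from the attribute as written.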

The sandbox attribute, when specified, enables a set of extra restrictions on any content hosted by the iframe. Its value must be an unordered set of unique space-separated tokens that are ASCII case-insensitive. The allowed values are allow-forms, allow-pointer-lock, allow-popups, allow-same-origin, allow-scripts, and allow-top-navigation.

When the attribute is set, the content is treated as being from a unique origin, forms, scripts, and various potentially annoying APIs are disabled, links are prevented from targeting other browsing contexts, and plugins are secured.

The allow-same-origin keyword causes the content to be treated as being from its real origin instead of forcing it into a unique origin; the allow-top-navigation keyword allows the content to navigate its top-level browsing context; and the allow-forms, allow-pointer-lock, allow-popups and allow-scripts keywords re-enable forms, the pointer lock API, popups, and scripts respectively. [POINTERLOCK]

Setting both the allow-scripts and allow-same-origin keywords together when the embedded page has the same origin as the page containing the iframe allows the embedded page to simply remove the sandbox attribute and then reload itself, effectively breaking out of the sandbox altogether.
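An authoring tool could flag this combination. The sketch below is a hypothetical lint, not a user agent requirement. Origins are approximated as (scheme, host, port) tuples, which is only adequate for http and https URLs. The function names origin and sandbox_escape_risk are illustrative.

```python
import re
from urllib.parse import urljoin, urlsplit

DEFAULT_PORTS = {"http": 80, "https": 443}


def origin(url: str) -> tuple:
    """(scheme, host, port) tuple; adequate for http(s) URLs only."""
    parts = urlsplit(url)
    port = parts.port if parts.port is not None else DEFAULT_PORTS.get(parts.scheme)
    return (parts.scheme, parts.hostname, port)


def sandbox_escape_risk(sandbox_value: str, page_url: str, frame_src: str) -> bool:
    """Hypothetical lint: True when the framed page could remove its own sandbox."""
    # str.lower is broader than ASCII lowercasing; acceptable for a lint.
    keywords = {t.lower() for t in re.split(r"[ \t\n\f\r]+", sandbox_value) if t}
    scripts_and_origin = {"allow-scripts", "allow-same-origin"} <= keywords
    # Resolve the iframe's src against the embedding page before comparing origins.
    same_origin = origin(page_url) == origin(urljoin(page_url, frame_src))
    return scripts_and_origin and same_origin


print(sandbox_escape_risk("allow-scripts allow-same-origin",
                          "https://example.com/page", "/widget.html"))  # True
print(sandbox_escape_risk("allow-scripts allow-same-origin",
                          "https://example.com/page",
                          "https://usercontent.example.net/widget.html"))  # False
```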

These flags only take effect when the nested browsing context of the iframe is navigated. Removing them, or removing the entire sandbox attribute, has no effect on an already-loaded page.

Potentially hostile files should not be served from the same server as the file containing the iframe element. Sandboxing hostile content is of minimal help if an attacker can convince the user to just visit the hostile content directly, rather than in the iframe. To limit the damage that can be caused by hostile HTML content, it should be served from a separate dedicated domain. Using a different domain ensures that scripts in the files are unable to attack the site, even if the user is tricked into visiting those pages directly, without the protection of the sandbox attribute.

When an iframe element with a sandbox attribute has its nested browsing context created (before the initial about:blank Document is created), and when an iframe element's sandbox attribute is set or changed while it has a nested browsing context, the user agent must parse the sandboxing directive using the attribute's value as the input, and the iframe element's nested browsing context's iframe sandboxing flag set as the output.

When an iframe element's sandbox attribute is removed while it has a nested browsing context, the user agent must empty the iframe element's nested browsing context's iframe sandboxing flag set.
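The following sketch models how the iframe sandboxing flag set changes over time, and why attribute changes only matter at the next navigation. The flag names are a simplified, illustrative subset rather than the spec's full list. The class SandboxedIFrame is hypothetical. The model also ignores flags inherited from parent browsing contexts.

```python
import re

# Simplified, illustrative flag names; the spec's list is longer.
ALL_FLAGS = frozenset({
    "navigation", "auxiliary-navigation", "top-level-navigation",
    "plugins", "origin", "forms", "pointer-lock", "scripts",
})

# Each keyword leaves one flag unset.
KEYWORD_CLEARS = {
    "allow-popups": "auxiliary-navigation",
    "allow-top-navigation": "top-level-navigation",
    "allow-same-origin": "origin",
    "allow-forms": "forms",
    "allow-pointer-lock": "pointer-lock",
    "allow-scripts": "scripts",
}


def parse_sandboxing_directive(value: str) -> frozenset:
    tokens = {t.lower() for t in re.split(r"[ \t\n\f\r]+", value) if t}
    return ALL_FLAGS - {KEYWORD_CLEARS[t] for t in tokens if t in KEYWORD_CLEARS}


class SandboxedIFrame:
    """Hypothetical model: attribute changes update the iframe sandboxing flag set,
    but a loaded page keeps the flags that were in force when it was navigated to."""

    def __init__(self, sandbox=None) -> None:
        self.iframe_flags = frozenset()
        if sandbox is not None:
            self.set_sandbox(sandbox)  # parsed before the initial about:blank exists
        self.page_flags = frozenset()
        self.navigate()                # initial about:blank document

    def set_sandbox(self, value: str) -> None:
        self.iframe_flags = parse_sandboxing_directive(value)

    def remove_sandbox(self) -> None:
        self.iframe_flags = frozenset()  # empty the flag set

    def navigate(self) -> None:
        # Only navigation applies the current flag set to a new page.
        self.page_flags = self.iframe_flags


frame = SandboxedIFrame("allow-scripts")
frame.remove_sandbox()
print("plugins" in frame.page_flags)  # True: the already-loaded page is unaffected
frame.navigate()
print(frame.page_flags)               # frozenset()
```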

In this example, some completely-unknown, potentially hostile, user-provided HTML content is embedded in a page. Because it is served from a separate domain, it is affected by all the normal cross-site restrictions. In addition, the embedded page has scripting disabled, plugins disabled, forms disabled, and it cannot navigate any frames or windows other than itself (or any frames or windows it itself embeds).

<p>We're not scared of you! Here is your content, unedited:</p>
<iframe sandbox src="http://usercontent.example.net/getusercontent.cgi?id=12193"></iframe>

It is important to use a separate domain so that if the attacker convinces the user to visit that page directly, the page doesn't run in the context of the site's origin, which would make the user vulnerable to any attack found in the page.

In this example, a gadget from another site is embedded. The gadget has scripting and forms enabled, and the origin sandbox restrictions are lifted, allowing the gadget to communicate with its originating server. The sandbox is still useful, however, as it disables plugins and popups, thus reducing the risk of the user being exposed to malware and other annoyances.

<iframe sandbox="allow-same-origin allow-forms allow-scripts"
        src="http://maps.example.com/embedded.html"></iframe>

Suppose a file A contained the following fragment:

<iframe sandbox="allow-same-origin allow-forms" src=B></iframe>

Suppose that file B contained an iframe also:

<iframe sandbox="allow-scripts" src=C></iframe>

Further, suppose that file C contained a link:

<a href=D>Link</a>

For this example, suppose all the files were served as text/html.

Page C in this scenario has all the sandboxing flags set. Scripts are disabled, because the iframe in A has scripts disabled, and this overrides the allow-scripts keyword set on the iframe in B. Forms are also disabled, because the inner iframe (in B) does not have the allow-forms keyword set.

Suppose now that a script in A removes all the sandbox attributes in A and B.

This would change nothing immediately. If the user clicked the link in C, loading page D into the iframe in B, page D would now act as if the iframe in B had the allow-same-origin and allow-forms keywords set, because that was the state of the nested browsing context in the iframe in A when page B was loaded.
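The A/B/C/D scenario can be checked with a small set-union model. It assumes that a newly loaded page receives its iframe's flag set combined with the flags its embedding page is already subject to. The flag names and helper functions are illustrative, not spec definitions.

```python
# Illustrative flag names only.
ALL_FLAGS = frozenset({
    "navigation", "auxiliary-navigation", "top-level-navigation",
    "plugins", "origin", "forms", "pointer-lock", "scripts",
})


def flags_except(*lifted: str) -> frozenset:
    """iframe sandboxing flag set for a sandbox attribute lifting the given flags."""
    return ALL_FLAGS - set(lifted)


def flags_on_navigation(iframe_flags: frozenset, parent_page_flags: frozenset) -> frozenset:
    """A newly loaded page gets its iframe's flags plus its embedder's flags."""
    return iframe_flags | parent_page_flags


a_flags = frozenset()                                                    # A is not sandboxed
b_flags = flags_on_navigation(flags_except("origin", "forms"), a_flags)  # iframe in A
c_flags = flags_on_navigation(flags_except("scripts"), b_flags)          # iframe in B
print(c_flags == ALL_FLAGS)  # True: every flag is set for C

# A script removes both sandbox attributes; the flag set of the iframe in B is
# emptied, but B's own page keeps the flags it was loaded with.
d_flags = flags_on_navigation(frozenset(), b_flags)
print(sorted(ALL_FLAGS - d_flags))  # ['forms', 'origin']
```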

Generally speaking, dynamically removing or changing the sandbox attribute is ill-advised, because it can make it quite hard to reason about what will be allowed and what will not.

The iframe element supports dimension attributes for cases where the embedded content has specific dimensions (e.g. ad units have well-defined dimensions).

An iframe element never has fallback content, as it will always create a nested browsing context, regardless of whether the specified initial contents are successfully used.

Descendants of iframe elements represent nothing. (In legacy user agents that do not support iframe elements, the contents would be parsed as markup that could act as fallback content.)

When used in HTML documents, the allowed content model of iframe elements is text, except that invoking the HTML fragment parsing algorithm with the iframe element as the context element and the text contents as the input must result in a list of nodes that are all phrasing content, with no parse errors having occurred, with no script elements being anywhere in the list or as descendants of elements in the list, and with all the elements in the list (including their descendants) being themselves conforming.

The iframe element must be empty in XML documents.

The HTML parser treats markup inside iframe elements as text.

The IDL attributes src, srcdoc, name, and sandbox must reflect the respective content attributes of the same name.
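Plain string reflection can be modeled with a Python descriptor. The descriptor forwards IDL attribute reads and writes to a dictionary of content attributes. This sketch covers only the simple DOMString case, as used for name and srcdoc. src and sandbox are left out because URL and token-list reflection follow additional rules. ReflectedString and IFrameReflectionModel are hypothetical names.

```python
class ReflectedString:
    """Descriptor mirroring a DOMString IDL attribute onto a content attribute."""

    def __init__(self, content_name: str) -> None:
        self.content_name = content_name

    def __get__(self, obj, objtype=None):
        if obj is None:
            return self
        # An absent content attribute reads back as the empty string.
        return obj.attributes.get(self.content_name, "")

    def __set__(self, obj, value) -> None:
        # Setting the IDL attribute sets the content attribute.
        obj.attributes[self.content_name] = str(value)


class IFrameReflectionModel:
    """Hypothetical element storing content attributes in a dict."""

    srcdoc = ReflectedString("srcdoc")
    name = ReflectedString("name")

    def __init__(self, **attributes: str) -> None:
        self.attributes = dict(attributes)


frame = IFrameReflectionModel(name="preview")
print(frame.name)        # preview
frame.srcdoc = "<p>Hello</p>"
print(frame.attributes)  # {'name': 'preview', 'srcdoc': '<p>Hello</p>'}
```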

The contentDocument IDL attribute must return the Document object of the active document of the iframe element's nested browsing context, if any and if its effective script origin is the same origin as the effective script origin specified by the incumbent settings object, or null otherwise.

The contentWindow IDL attribute must return the WindowProxy object of the iframe element's nested browsing context, if any, or null otherwise.
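The difference between the two getters can be sketched as follows: contentWindow is always returned, while contentDocument is gated on origin. The sketch makes two simplifying assumptions. Origins are (scheme, host, port) tuples compared by equality, which ignores unique origins. The incumbent_origin parameter stands in for the origin taken from the incumbent settings object. All class names are hypothetical, and None plays the role of null.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Origin = Tuple[str, str, int]


@dataclass
class Document:
    effective_script_origin: Origin


@dataclass
class BrowsingContext:
    window_proxy: object
    active_document: Document


@dataclass
class IFrame:
    nested_context: Optional[BrowsingContext]

    def content_window(self) -> Optional[object]:
        # The WindowProxy is exposed regardless of origin.
        return self.nested_context.window_proxy if self.nested_context else None

    def content_document(self, incumbent_origin: Origin) -> Optional[Document]:
        ctx = self.nested_context
        if ctx is None:
            return None
        doc = ctx.active_document
        # Only same-origin callers receive the Document; others get None (null).
        return doc if doc.effective_script_origin == incumbent_origin else None
```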

Here is an example of a page using an iframe to include advertising from an advertising broker:

<iframe src="http://ads.example.com/?customerid=923513721&format=banner"
        width="468" height="60"></iframe>

embed element

Content attributes: src, type, width, height

interface HTMLEmbedElement : HTMLElement {
  attribute DOMString src;
  attribute DOMString type;
  attribute DOMString width;
  attribute DOMString height;
  legacycaller any (any... arguments);
};

Depending on the type of content instantiated by the embed element, the node may also support other interfaces.

The embed element provides an integration point for an external (typically non-HTML) application or interactive content.

The src attribute gives the address of the resource being embedded. The attribute, if present, must contain a valid non-empty URL potentially surrounded by spaces.

The type attribute, if present, gives the MIME type by which the plugin to instantiate is selected. The value must be a valid MIME type. If both the type attribute and the src attribute are present, then the type attribute must specify the same type as the explicit Content-Type metadata of the resource given by the src attribute.
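A checker might test the consistency requirement as sketched below. The sketch makes two assumptions of its own. First, "the same type" is read as the same type/subtype, compared case-insensitively, with parameters such as charset ignored. Second, nothing is reported when either value is missing. The function names are hypothetical.

```python
from typing import Optional


def mime_essence(value: str) -> str:
    # Keep only "type/subtype": drop parameters and compare case-insensitively.
    return value.split(";", 1)[0].strip().lower()


def embed_type_matches(type_attr: Optional[str], content_type: Optional[str]) -> bool:
    """Hypothetical check of an embed's type attribute against the resource's Content-Type."""
    if type_attr is None or content_type is None:
        return True  # nothing to compare
    return mime_essence(type_attr) == mime_essence(content_type)


print(embed_type_matches("image/svg+xml", "Image/SVG+XML; charset=utf-8"))  # True
print(embed_type_matches("image/svg+xml", "text/html"))                     # False
```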

In each of the following cases, any plugin instantiated for the element must be removed, and the embed element then represents nothing: when the element is created with neither a src attribute nor a type attribute; when attributes are removed such that neither attribute is present on the element anymore; when the element has a media element ancestor; and when the element has an ancestor object element that is not showing its fallback content.

An embed element is said to be potentially active when the following conditions are all met simultaneously:

The element is in a Document or was in a Document the last time the event loop reached step 1.

The element's Document is fully active.

The element has either a src attribute set or a type attribute set (or both).

The element's src attribute is either absent or its value is not the empty string.

The element is not a descendant of an object element that is not showing its fallback content.

Whenever an embed element that was not potentially active becomes potentially active, and whenever a potentially active embed element that is remaining potentially active has its src attribute set, changed, or removed or its type attribute set, changed, or removed, the user agent must queue a task using the embed task source to run the embed element setup steps.
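The listed conditions and the trigger for queuing the setup steps can be expressed as two predicates over a snapshot of the element's state. EmbedState and its fields are hypothetical stand-ins for facts the user agent would already track. As a simplification, setting an attribute to its current value is treated as no change.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class EmbedState:
    """Hypothetical snapshot of the facts the conditions depend on."""
    in_document: bool                     # or was in one at the last event-loop step 1
    document_fully_active: bool
    src_attr: Optional[str]               # None when the attribute is absent
    type_attr: Optional[str]
    in_object_not_showing_fallback: bool  # has such an object ancestor


def potentially_active(e: EmbedState) -> bool:
    return (
        e.in_document
        and e.document_fully_active
        and (e.src_attr is not None or e.type_attr is not None)
        and (e.src_attr is None or e.src_attr != "")
        and not e.in_object_not_showing_fallback
    )


def needs_setup(before: EmbedState, after: EmbedState) -> bool:
    """Should a task be queued to run the embed element setup steps?"""
    if not potentially_active(after):
        return False
    if not potentially_active(before):
        return True  # just became potentially active
    # Stayed potentially active: re-run only if src or type changed.
    return (before.src_attr, before.type_attr) != (after.src_attr, after.type_attr)
```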

The embed element setup steps are as follows:

If another task has since been queued to run the embed element setup steps for this element, then abort these steps.

If the element has a src attribute set

The user agent must resolve the value of the element's src attribute, relative to the element. If that is successful, the user agent should fetch the resulting absolute URL, from the element's browsing context scope origin if it has one. The task that is queued by the networking task source once the resource has been fetched must run the following steps:

If another task has since been queued to run the embed element setup steps for this element, then abort these steps.

Determine the type of the content being embedded, as follows (stopping at the first substep that determines the type):

If the element has a type attribute, and that attribute's value is a type that a plugin supports, then the value of the type attribute is the content's type.

Otherwise, if applying the URL parser algorithm to the URL of the specified resource (after any redirects) results in a parsed URL whose path component matches a pattern that a plugin supports, then the content's type is the type that that plugin can handle.

For example, a plugin might say that it can handle resources with path components that end with the four-character string ".swf".

Otherwise, if the specified resource has explicit Content-Type metadata, then that is the content's type.

Otherwise, the content has no type and there can be no appropriate plugin for it.

If the previous step determined that the content's type is image/svg+xml, then run the following substeps:

If the embed element is not associated with a nested browsing context, associate the element with a newly created nested browsing context, and, if the element has a name attribute, set the browsing context name of the element's nested browsing context to the value of this attribute.

Navigate the nested browsing context to the fetched resource, with replacement enabled, and with the embed element's document's browsing context as the source browsing context. (The src attribute of the embed element doesn't get updated if the browsing context gets further navigated to other locations.)

The embed element now represents its associated nested browsing context.

Otherwise, find and instantiate an appropriate plugin based on the content's type, and hand that plugin the content of the resource, replacing any previously instantiated plugin for the element. The embed element now represents this plugin instance.

Whether the resource is fetched successfully or not (e.g. whether the response code was a 2xx code or equivalent) must be ignored when determining the content's type and when handing the resource to the plugin.

This allows servers to return data for plugins even with error responses (e.g. HTTP 500 Internal Server Error codes can still contain plugin data).

Fetching the resource must delay the load event of the element's document.

If the element has no src attribute set

The user agent should find and instantiate an appropriate plugin based on the value of the type attribute. The embed element now represents this plugin instance.
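The two "if another task has since been queued" checks in the src branch amount to a supersession test. One common way to implement such a test is a per-element counter that each queued task captures. The sketch below assumes that approach; it is not mandated by the text above. The single deque stands in for both the embed and networking task sources, and the fetch is pretended to complete in the next task. EmbedElement, queue_setup, and run_tasks are hypothetical names.

```python
from collections import deque
from typing import Callable, Deque, List

# Stand-in for the event loop's task queue (embed and networking task sources).
task_queue: Deque[Callable[[], None]] = deque()


class EmbedElement:
    """Hypothetical element that tracks the most recently queued setup task."""

    def __init__(self) -> None:
        self.latest_setup = 0
        self.loaded: List[str] = []


def queue_setup(element: EmbedElement, src: str) -> None:
    element.latest_setup += 1
    ticket = element.latest_setup

    def on_fetched() -> None:
        if ticket != element.latest_setup:
            return  # superseded while the resource was being fetched
        element.loaded.append(src)  # would determine the type and hand off to a plugin

    def setup_steps() -> None:
        if ticket != element.latest_setup:
            return  # step 1: a newer setup task was queued, so abort
        task_queue.append(on_fetched)  # pretend the fetch completes in the next task

    task_queue.append(setup_steps)


def run_tasks() -> None:
    while task_queue:
        task_queue.popleft()()


embed = EmbedElement()
queue_setup(embed, "a.swf")
task_queue.popleft()()       # setup for a.swf runs and starts its fetch
queue_setup(embed, "b.swf")  # src changes before a.swf finishes loading
run_tasks()
print(embed.loaded)          # ['b.swf']
```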

The embed element has no fallback content. If the user agent can't find a suitable plugin when attempting to find and instantiate one for the algorithm above, then the user agent must use a default plugin. This default could be as simple as saying "Unsupported Format".
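The type-determination substeps, together with the SVG branch and the default-plugin fallback, can be sketched as below. The plugin registry (a hypothetical mapping from MIME type to claimed path suffixes) is an assumption, as are the returned handler labels. Stripping parameters from the Content-Type value is also a simplification made here, not a rule stated above.

```python
from typing import Dict, List, Optional
from urllib.parse import urlsplit

# Hypothetical plugin registry: MIME type -> path suffixes the plugin claims.
PLUGINS: Dict[str, List[str]] = {
    "application/x-shockwave-flash": [".swf"],
}


def determine_content_type(type_attr: Optional[str], final_url: str,
                           content_type: Optional[str]) -> Optional[str]:
    # 1. A type attribute naming a type some plugin supports wins.
    if type_attr is not None and type_attr.strip().lower() in PLUGINS:
        return type_attr.strip().lower()
    # 2. Otherwise, match the URL path (after redirects) against plugin patterns.
    path = urlsplit(final_url).path
    for mime, suffixes in PLUGINS.items():
        if any(path.endswith(suffix) for suffix in suffixes):
            return mime
    # 3. Otherwise, use explicit Content-Type metadata (parameters dropped).
    if content_type is not None:
        return content_type.split(";", 1)[0].strip().lower()
    # 4. No type: there can be no appropriate plugin.
    return None


def choose_handler(content_type: Optional[str]) -> str:
    if content_type == "image/svg+xml":
        return "nested browsing context"
    if content_type in PLUGINS:
        return content_type  # instantiate the plugin registered for this type
    return "default plugin"  # e.g. one that just says "Unsupported Format"


print(choose_handler(determine_content_type(None, "https://example.com/movie.swf?v=2", "text/plain")))
# application/x-shockwave-flash
```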

Whenever an embed element that was potentially